Foundation

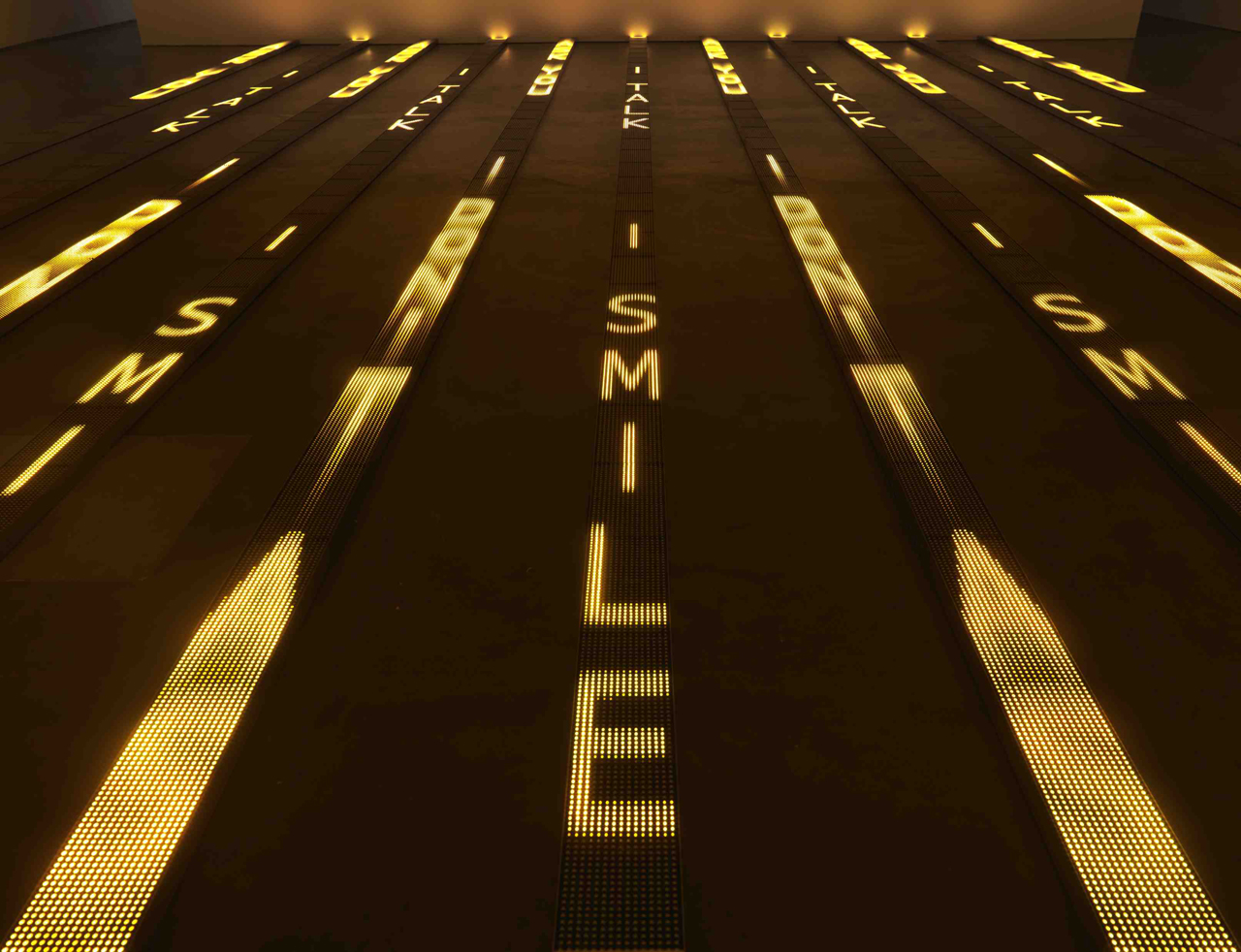

Marc Quinn

October 5 → January 6, 2008

Gathering over forty recent works, DHC/ART’s inaugural exhibition by conceptual artist Marc Quinn is the largest ever mounted in North America and the artist’s first solo show in Canada.

The PHI_portal, a cross-border participatory installation, appeared on the PHI Centre's main floor in the spring of 2020. One year later, despite Montreal's COVID-19 lockdowns, the program welcomed many initiatives fostering cultural exchange worldwide through the lens of community and the arts. The second season, which took place in the fall of 2020, resulted in intimate, audience-less connections. Lucas LaRochelle, one of the PHI_portal's curators, initiated a series of conversations about artificial intelligence and ethics with Rouzbeh Shadpey, Moisés Horta Valenzuela, and Suzanne Kite. In this article, they share some of the ideas explored during these workshops and conversations.

The rapidly increasing ubiquity, and in turn misuse, of artificial intelligence within the architectures of everyday life demands critical intervention to confront the harm caused by the biases built into these technologies. The Google Photos algorithm labeling images of Black people as “gorillas,” the Amazon recruiting tool downgrading resumes containing the word “women’s,” and the documented racial bias underpinning predictive policing tools like PredPol are but a few examples in a sea of evidence that makes clear the disastrous effects of allowing AI deployment to go unchecked. Artists are at the forefront not only of making this technological violence visible, but also of radically reimagining how we might coexist more ethically with artificial intelligence in the near future.

Last fall, I invited artists working through issues like these to take part in Situated Intelligence, a programming initiative exploring the intersection of artificial intelligence, artistic production, and ethics through technical workshops and critical conversations. The programming took place within the PHI_portal, an intimate public art installation connecting communities across 50 cities, from Milwaukee to Erbil to Bamako, through immersive livestream technology. Situated Intelligence was developed in collaboration with Kabakoo Academies, a Bamako-based organization that works to catalyze sustainable futures by integrating local knowledge systems into technology education. Students taking an artificial intelligence course at Kabakoo Academies joined the conversation through the Bamako_portal, a sister site of the PHI_portal facilitated by curators Mariam Sylla and Talhata Zourkaneini Toure. Three invited artists, Moisés Horta Valenzuela, Suzanne Kite, and Rouzbeh Shadpey, joined virtually from their respective homes in Berlin, Tulasi/Tulsa, and Tiohtià:ke/Mooniyang/Montreal to share their practices and perspectives with the Kabakoo students.

The first workshop was led by Moisés Horta Valenzuela, a Berlin-based sound artist, technologist, and electronic musician from Tijuana, México. Valenzuela works within the fields of computer music, artificial intelligence, and the history and politics of digital technologies. His workshop, Forging Transcultural AI Music Futures, introduced participants to open source audio style transfer tools while engaging them in a discussion about strategies for developing decolonized AI practices. For Valenzuela, “the clearest explanation of a decolonial AI practice is that you're not serving the interests of the institution, you're serving your community.”

Valenzuela’s interest in machine learning emerged as a means to explore an “otherwise” to Westernized portrayals of AI, which traditionally center themes of automation, optimization, and dystopian techno-governance. “How do you think about AI without falling into these cliches? How do you implement your own culture into it?” he asks. Framing the Western-centric and white supremacist tendencies of dominant depictions of artificial intelligence as a cliche is a powerful gesture. In doing so, Valenzuela drains these depictions of their aesthetic relevance, demanding that we imagine computational systems rooted in culturally situated knowledge.

The second workshop in the series was led by Suzanne Kite, a Tulasi/Tulsa-based Oglála Lakȟóta performance artist, visual artist, and composer whose practice investigates and activates contemporary Lakota epistemologies through research-creation, computational media, and performance. Kite’s workshop, Nonhuman Futures: Imagining Indigenous Ethics for AI, introduced participants to her artistic and scholarly practices and led them through a futuring exercise (a systematic process for thinking about, drafting, and planning possible futures) in which they imagined new technologies guided by ethical protocols. Speaking to the origins of her interest in working with artificial intelligence, Kite explains, “As I got into tools for building machine learning systems, I did so simultaneously with an exploration of Lakota epistemology. My question was: are there existing relationships and ethical guidelines for creating these systems? Can I draw from those to create my own, that would lead to thinking about AI in a more future-facing way?”

These questions were recently elaborated in ‘How To Build Anything Ethically,’ Kite’s contribution to the Indigenous Protocols and Artificial Intelligence Position Paper, in which she applies Lakota knowledge to develop a protocol for constructing computational hardware through each phase of its lifecycle. Central to this work is the recognition that any effective intervention into the ethical creation of artificial intelligence must begin by examining the production of the physical components that make computation possible. Kite is hopeful that more ethical practices are within reach, explaining that “these are all very, very new tools, and the issues that plague them, in the material sense, are very fixable. I think we’re not that far away from biocomputing, we're not that far away from avoiding mining, and those relationships to the land are still repairable.”

The season’s final workshop was led by Rouzbeh Shadpey, a Tiohtià:ke/Mooniyang/Montreal-based transdisciplinary artist whose current work focuses on the invisibly-ill body, thresholds of nonexistence, and the AI voice. His workshop, Those Voices Without Breath: Thinking the AI Voice, began by unpacking the Eurocentrism of the sound studies canon and unfolded into a discussion on the ethics of AI-produced vocal clones. Shadpey argues, “the metaphor of the voice as being a source of agency, is horribly deceiving. Using AI to create a perfect replica effectively undoes the narrative that the voice is the seat of power and agency.”

The rise of ever more convincing deepfakes has eroded our ability to assign a truth value to digitally rendered material. The proliferation of AI-powered vocal cloning tools, such as the one developed by the Montreal-based startup Lyrebird, has raised questions about the role of such technology in the production of fake news. Cognizant of this potential misuse, Lyrebird’s ethics statement closes with something of a provocation: “we want to raise attention about the lack of evidence that audio recordings may represent in the near future.” Shadpey’s work interrogates these possible futures with particular precision, taking aim at the ethical stakes of granting persuasive agency to artificial speech while many ‘real,’ embodied voices continue to be silenced. “What's harmful about this narrative is that it further displaces all of the voices, human and non-human, that have never counted.”

While working through aesthetic means, these artists share an outlook firmly set on interrogating the material implications of the misuse of machine learning technologies. Fresh on Kite’s mind is the Los Angeles Police Commission's recent unanimous vote allowing the LAPD to use facial recognition technology, despite ample evidence that the datasets used to train these algorithms are racially biased, with the resulting systems disproportionately targeting Black and Brown communities. “This will undoubtedly lead to the real, material, death of human beings,” Kite warns. “I hope that my work, which is at the intersection of AI and tech and art and ethics, can clarify that Indigenous AI means the repair of these (human/non-human) relationships. I hope that my work contributes towards the abolition of the use of these tools to police human beings.”

Abolition is a necessary gesture as evidence accumulates of the white supremacist logic that underpins the design of AI systems. But as Ruha Benjamin, author of ‘Race After Technology: Abolitionist Tools for the New Jim Code,’ notes: “Calls for abolition are never simply about bringing harmful systems to an end but also about envisioning new ones.” The possibilities of more just futures become clearer and more tangible with every crack made in the existing system. “Machine learning is obviously a potent tool when it is used critically,” Shadpey says. “It can function as a mirror to ourselves and lay bare the biases that we inculcate into various things. So for me, that's where the interest lies. That's where the power is.”

Through their work, artists like Shadpey, Kite, and Valenzuela lay the groundwork from which we might better imagine, build, and coexist in a world increasingly interwoven with artificial intelligence. By centering community interests, building ethical frameworks rooted in Indigenous knowledge systems, and unearthing the narratives that underpin harmful bias, they show that more ethical AI futures are not only possible but already emerging in the present. “I want to be making art that works through some of these issues in a Good Way, on the front end of things,” Kite shares. “We shouldn't have to spend all of our time catching the misuse of AI tools. That's too late. We need to be making tools in a Good Way.”

About the author

Lucas LaRochelle is a multidisciplinary designer, artist, and researcher whose work is concerned with queer and trans digital cultures, community-based archiving, and co-creative media. They are the founder of Queering The Map, a community-generated counter-mapping platform for digitally archiving LGBTQ2IA+ experience in relation to physical space.

